Solution to hard algebra questions for SSC CGL Set 64

Learn to solve 10 hard algebra questions in 12 minutes from SSC CGL Solution Set 64. Learn basic to advanced algebra problem solving techniques.

If you have not yet taken this test you should take it first at,

SSC CGL level question set 64 on Algebra 15.

Quick solutions to 10 hard algebra questions for SSC CGL Set 64 - answering time was 12 mins

Q1. If $a$, $b$, and $c$ are real numbers and $a^2+b^2+c^2=2(a-b-c)-3$ then the value of $(a+b+c)$ is,

- $-1$

- $1$

- $0$

- $3$

Solution 1 - Problem analysis and execution

Identifying the key pattern and applying the principle of collection of friendly terms, we bring all the terms to the LHS and split the 3 into three 1s, one to complete each square,

$a^2+b^2+c^2=2(a-b-c)-3$,

Or, $(a^2-2a+1)+(b^2+2b+1)+(c^2+2c+1)=0$,

Or, $(a-1)^2+(b+1)^2+(c+1)^2=0$.

By the principle of zero sum of square terms, as $a$, $b$ and $c$ are real, no square on the LHS can be negative, so each of the square terms must be zero,

$(a-1)^2=0$,

$(b+1)^2=0$,

$(c+1)^2=0$.

Or,

$a=1$,

$b=-1$,

$c=-1$.

So,

$(a+b+c)=-1$.

Answer: Option a: $-1$.

Key concepts used: Key pattern identification -- Principle of collection of friendly terms -- Principle of zero sum of square terms.
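As a quick sanity check, the values found above can be substituted back into the given equation. This is only a minimal sketch; the variable names `lhs`, `rhs` and `total` are chosen here for illustration.

```python
# Substitute a = 1, b = -1, c = -1 back into a^2 + b^2 + c^2 = 2(a - b - c) - 3.
a, b, c = 1, -1, -1

lhs = a**2 + b**2 + c**2      # left-hand side of the given equation
rhs = 2 * (a - b - c) - 3     # right-hand side of the given equation
total = a + b + c             # the target expression

print(lhs == rhs, total)      # True -1
```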

Q2. If $p^4=119-\displaystyle\frac{1}{p^4}$, then the value of $p^3-\displaystyle\frac{1}{p^3}$ is,

- 24

- 36

- 18

- 32

Solution 2 - Problem analysis and execution

The target expression is a difference of inverse cubes, whereas with a little rearrangement the given expression gives the value of the sum of inverse 4th powers. Using the principle of interaction of inverses, we first reduce the power of the given sum of inverses from 4 to 2, and then build up from there to the difference of inverse cubes.

$p^4=119-\displaystyle\frac{1}{p^4}$,

Or, $p^4+\displaystyle\frac{1}{p^4}=119$,

Or, $p^4+2+\displaystyle\frac{1}{p^4}=121$,

Or, $\left(p^2+\displaystyle\frac{1}{p^2}\right)^2=121$,

Or, $p^2+\displaystyle\frac{1}{p^2}=11$, as $p^2+\displaystyle\frac{1}{p^2}$ is a sum of squares it is positive, so the negative root $-11$ is rejected.

As $a^3-b^3=(a-b)(a^2+ab+b^2)$, and $ab=1$ when $a=p$ and $b=\displaystyle\frac{1}{p}$, the sum of squares of inverses needs only a little modification to form the second factor of the expanded target expression,

$p^2+\displaystyle\frac{1}{p^2}=11$,

Or, $p^2+1+\displaystyle\frac{1}{p^2}=12$.

This is the second factor of the target expression. We need to find the value of the first factor, $\left(p-\displaystyle\frac{1}{p}\right)$ now,

$p^2+\displaystyle\frac{1}{p^2}=11$,

Or, $p^2-2+\displaystyle\frac{1}{p^2}=11-2=9$

Or, $\left(p-\displaystyle\frac{1}{p}\right)^2=9$,

Or, $\left(p-\displaystyle\frac{1}{p}\right)=3$.

A negative value of $p-\displaystyle\frac{1}{p}$ would make $p^3-\displaystyle\frac{1}{p^3}$ negative, and as the choice value set has no negative value, the negative root is ignored here.

So the value of the target expression is,

$p^3-\displaystyle\frac{1}{p^3}$

$=\left(p-\displaystyle\frac{1}{p}\right)\left(p^2+1+\displaystyle\frac{1}{p^2}\right)$

$=3\times{12}$

$=36$.

Answer: Option b : 36.

Key concepts used: Key pattern identification -- Deductive reasoning -- Principle of interaction of inverses -- Input transformation -- Factorization of difference of cubes.
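The result can be cross-checked numerically. The sketch below assumes the positive root of $p-\displaystyle\frac{1}{p}=3$, that is, of $p^2-3p-1=0$, and uses floating point, so the values are only approximately 119 and 36. The names `given` and `target` are illustrative.

```python
import math

# Positive root of p - 1/p = 3, i.e. p^2 - 3p - 1 = 0 (an assumed concrete value of p).
p = (3 + math.sqrt(13)) / 2

given = p**4 + 1 / p**4     # should be close to 119
target = p**3 - 1 / p**3    # should be close to 36

print(round(given, 6), round(target, 6))
```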

Q3. If $a+b=1$, then the value of $a^3+b^3-ab-(a^2-b^2)^2$ is,

- $0$

- $-1$

- $1$

- $2$

Solution 3 - Problem analysis and execution

We take out the factor $(a+b)$ as many times as possible and replace it by 1 to simplify the target expression.

$E=a^3+b^3-ab-(a^2-b^2)^2$

$=(a+b)(a^2-ab+b^2)-ab-\left[(a+b)(a-b)\right]^2$

$=a^2-ab+b^2-ab-(a-b)^2$

$=(a-b)^2-(a-b)^2$

$=0$

Answer: Option a: 0.

Key concepts used: Key pattern identification -- efficient simplification.
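Since the answer does not depend on the particular values of $a$ and $b$, a spot check over a few sample values is possible. The following is a minimal sketch using exact rational arithmetic; the function name `expr` and the sample values are assumptions made for illustration.

```python
from fractions import Fraction

def expr(a, b):
    """The target expression a^3 + b^3 - ab - (a^2 - b^2)^2."""
    return a**3 + b**3 - a * b - (a**2 - b**2) ** 2

# Sample values of a; b is forced by the condition a + b = 1.
samples = [Fraction(n, 7) for n in range(-10, 11)]
values = [expr(a, 1 - a) for a in samples]

print(all(v == 0 for v in values))   # True
```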

Q4. If $\displaystyle\frac{x^{24}+1}{x^{12}}=7$, then the value of $\displaystyle\frac{x^{72}+1}{x^{36}}$ is,

- 433

- 343

- 322

- 432

Solution 4 - Problem analysis and execution

Using component expression substitution if we substitute $x^{12}=p$, both the given expression and the target expression are simplified.

The given expression,

$\displaystyle\frac{x^{24}+1}{x^{12}}=7$

Or, $\displaystyle\frac{p^2+1}{p}=7$,

Or, $p+\displaystyle\frac{1}{p}=7$.

Similarly the target expression is also simplified,

$E=\displaystyle\frac{x^{72}+1}{x^{36}}$,

$=\displaystyle\frac{p^6+1}{p^3}$,

$=p^3+\displaystyle\frac{1}{p^3}$.

This is now a simplified familiar problem.

From the transformed given expression we have,

$p+\displaystyle\frac{1}{p}=7$,

Or, $p^2+2+\displaystyle\frac{1}{p^2}=49$,

Or, $p^2-1+\displaystyle\frac{1}{p^2}=46$.

So transformed target expression is,

$E=p^3+\displaystyle\frac{1}{p^3}$

$=\left(p+\displaystyle\frac{1}{p}\right)\left(p^2-1+\displaystyle\frac{1}{p^2}\right)$

$=7\times{46}$

$=322$.

Answer: Option c: 322.

Key concepts used: Key pattern identification -- component expression substitution -- solving a simpler problem -- Input transformation -- target transformation -- principle of interaction of inverses.
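A different route to the same value, not the factorization used above, is the recurrence $s_k=s_1s_{k-1}-s_{k-2}$ for $s_k=p^k+\displaystyle\frac{1}{p^k}$ with $s_0=2$. The sketch below assumes this recurrence; `power_sum` is a hypothetical helper name.

```python
def power_sum(s1, n):
    """Return p^n + 1/p^n given s1 = p + 1/p, using s_k = s1*s_{k-1} - s_{k-2}."""
    prev, curr = 2, s1                  # s_0 = 2, s_1 = s1
    if n == 0:
        return prev
    for _ in range(n - 1):
        prev, curr = curr, s1 * curr - prev
    return curr

print(power_sum(7, 2), power_sum(7, 3))   # 47 322
```

The intermediate value 47 is $p^2+\displaystyle\frac{1}{p^2}=49-2$, in agreement with the steps of the solution.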

Q5. If $x^2+x=5$, then the value of $(x+3)^3+\displaystyle\frac{1}{(x+3)^3}$ is,

- 140

- 130

- 120

- 110

Solution 5 - Problem analysis and execution

By end state analysis, we need to transform the input expression in terms of $(x+3)$ first,

$x^2+x=5$,

Or, $(x^2+6x+9)-6x+x-9-5=0$,

Or, $(x+3)^2-5x-14=0$,

Or, $(x+3)^2-5(x+3)+15-14=0$,

Or, $(x+3)^2+1=5(x+3)$,

Or, $(x+3)+\displaystyle\frac{1}{(x+3)}=5$,

Or, $p+\displaystyle\frac{1}{p}=5$, with component expression substitution of $p=(x+3)$,

Or, $p^2-1+\displaystyle\frac{1}{p^2}=5^2-3=22$.

So the transformed target expression is,

$E=(x+3)^3+\displaystyle\frac{1}{(x+3)^3}$

$=p^3+\displaystyle\frac{1}{p^3}$

$=\left(p+\displaystyle\frac{1}{p}\right)\left(p^2-1+\displaystyle\frac{1}{p^2}\right)$

$=5\times{22}$

$=110$.

Answer: Option d: 110.

Key concepts used: Key pattern identification -- End state analysis -- input transformation -- component expression substitution -- principle of interaction of inverses.
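To cross-check, we may solve $x^2+x=5$ directly and evaluate the target for both roots. This is a floating point sketch, so the values are only approximately 110; the names `roots` and `values` are illustrative.

```python
import math

# Both roots of x^2 + x - 5 = 0.
roots = [(-1 + math.sqrt(21)) / 2, (-1 - math.sqrt(21)) / 2]

# Target expression (x + 3)^3 + 1/(x + 3)^3 for each root.
values = [(x + 3) ** 3 + 1 / (x + 3) ** 3 for x in roots]

print([round(v, 6) for v in values])
```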

Q6. If $\displaystyle\frac{p^2}{q^2}+\displaystyle\frac{q^2}{p^2}=1$, then the value of $(p^6+q^6)$ is,

- $0$

- $2$

- $3$

- $1$

Solution 6 - Problem analysis and execution

The given expression,

$\displaystyle\frac{p^2}{q^2}+\displaystyle\frac{q^2}{p^2}=1$,

Or, $p^4+q^4-p^2q^2=0$.

The target expression,

$E=p^6+q^6$

$=(p^2+q^2)(p^4+q^4-p^2q^2)$

$=0$.

Answer: Option a : 0.

Key concepts used: Key pattern identification that target expression is sum of cubes of $p^2$ and $q^2$ and input transformation to get the second factor of target expression as 0.
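A numeric check needs some care here: for real non-zero $p$ and $q$, the sum $\displaystyle\frac{p^2}{q^2}+\displaystyle\frac{q^2}{p^2}$ is at least 2, so values that satisfy the given condition are complex. The sketch below assumes one such pair, $q=1$ and $p=e^{i\pi/6}$, chosen only for illustration.

```python
import cmath

# An assumed complex pair satisfying the given condition: p^2 = e^{i*pi/3}, q = 1.
q = 1
p = cmath.exp(1j * cmath.pi / 6)

given = p**2 / q**2 + q**2 / p**2   # should be close to 1
target = p**6 + q**6                # should be close to 0

print(abs(given - 1) < 1e-9, abs(target) < 1e-9)
```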

Q7. If $x=\displaystyle\frac{\sqrt{3}+\sqrt{2}}{\sqrt{3}-\sqrt{2}}$, then the value of $x^3+\displaystyle\frac{1}{x^3}$ is,

- $1000$

- $970$

- $5$

- $98$

Solution 7 - Problem analysis

The target expression being a sum of inverse cubes, the input expression needs to be converted to the sum of inverses form,

$x+\displaystyle\frac{1}{x}=\displaystyle\frac{\sqrt{3}+\sqrt{2}}{\sqrt{3}-\sqrt{2}}+\displaystyle\frac{\sqrt{3}-\sqrt{2}}{\sqrt{3}+\sqrt{2}}$

$=(\sqrt{3}+\sqrt{2})^2+(\sqrt{3}-\sqrt{2})^2$, as the product of the two denominators is $3-2=1$

$=10$, as the middle terms cancel out.

So,

$x^2+2+\displaystyle\frac{1}{x^2}=100$,

Or, $x^2-1+\displaystyle\frac{1}{x^2}=100-3=97$.

Thus the target expression evaluates to,

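$E=x^3+\displaystyle\frac{1}{x^3}=\left(x+\displaystyle\frac{1}{x}\right)\left(x^2-1+\displaystyle\frac{1}{x^2}\right)=10\times{97}=970$.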

Answer: Option b: 970

Key concepts used: Key pattern identification -- End state analysis -- Deductive reasoning -- input transformation to match target expression form -- principle of interaction of inverses.
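The two values can be confirmed by direct evaluation. This is a floating point sketch, so the results are only approximately 10 and 970; the names `s` and `target` are illustrative.

```python
import math

r3, r2 = math.sqrt(3), math.sqrt(2)
x = (r3 + r2) / (r3 - r2)

s = x + 1 / x                 # should be close to 10
target = x**3 + 1 / x**3      # should be close to 970

print(round(s, 6), round(target, 6))
```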

Q8. If $x=\displaystyle\frac{a-b}{a+b}$, $y=\displaystyle\frac{b-c}{b+c}$, and $z=\displaystyle\frac{c-a}{c+a}$, then the value of $\displaystyle\frac{(1-x)(1-y)(1-z)}{(1+x)(1+y)(1+z)}$ is,

- $0$

- $1$

- $\displaystyle\frac{1}{2}$

- $2$

Solution 8 - Problem analysis and execution

By componendo dividendo on the three given expressions we have,

$x=\displaystyle\frac{a-b}{a+b}$,

Or, $\displaystyle\frac{1-x}{1+x}=\frac{b}{a}$.

$y=\displaystyle\frac{b-c}{b+c}$

Or, $\displaystyle\frac{1-y}{1+y}=\frac{c}{b}$.

$z=\displaystyle\frac{c-a}{c+a}$

Or, $\displaystyle\frac{1-z}{1+z}=\frac{a}{c}$.

Multiplying the three,

$\displaystyle\frac{(1-x)(1-y)(1-z)}{(1+x)(1+y)(1+z)}=\displaystyle\frac{b}{a}\times\displaystyle\frac{c}{b}\times\displaystyle\frac{a}{c}=1$.

Answer: Option b: $1$.

Key concepts used: Problem analysis -- Deductive reasoning -- Key pattern identification -- End state analysis -- Componendo dividendo technique -- Input transformation -- Principle of collection of friendly terms.
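As the result holds for any valid $a$, $b$ and $c$, a spot check with a few sample triples is possible. The sketch below uses exact rational arithmetic; the function name `ratio` and the sample triples are assumptions made for illustration.

```python
from fractions import Fraction

def ratio(a, b, c):
    """Evaluate (1-x)(1-y)(1-z) / ((1+x)(1+y)(1+z)) for the given a, b, c."""
    x = Fraction(a - b, a + b)
    y = Fraction(b - c, b + c)
    z = Fraction(c - a, c + a)
    return ((1 - x) * (1 - y) * (1 - z)) / ((1 + x) * (1 + y) * (1 + z))

# Sample positive integer triples, so that no denominator is zero.
results = [ratio(a, b, c) for a, b, c in [(1, 2, 3), (2, 5, 7), (3, 4, 11)]]

print(all(r == 1 for r in results))   # True
```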

Q9. If $m=-4$, and $n=-2$, then the value of $m^3-3m^2+3m+3n+3n^2+n^3$ is equal to,

- $-124$

- $124$

- $126$

- $-126$

Solution 9 - Problem analysis

The target expression,

$E=m^3-3m^2+3m+3n+3n^2+n^3$

$=(m-1)^3+1+(n+1)^3-1$

$=(-5)^3+(-1)^3$

$=-126$.

Answer: Option d: $-126$.

Key concepts used: Key pattern identification -- Principle of collection of friendly terms -- Efficient simplification.
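The grouping into two cubes can be checked against direct substitution. This is a minimal sketch; the names `direct` and `grouped` are illustrative.

```python
m, n = -4, -2

# Direct substitution into the original expression.
direct = m**3 - 3 * m**2 + 3 * m + 3 * n + 3 * n**2 + n**3

# The grouped form (m - 1)^3 + (n + 1)^3, after the +1 and -1 cancel.
grouped = (m - 1) ** 3 + (n + 1) ** 3

print(direct, grouped)   # -126 -126
```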

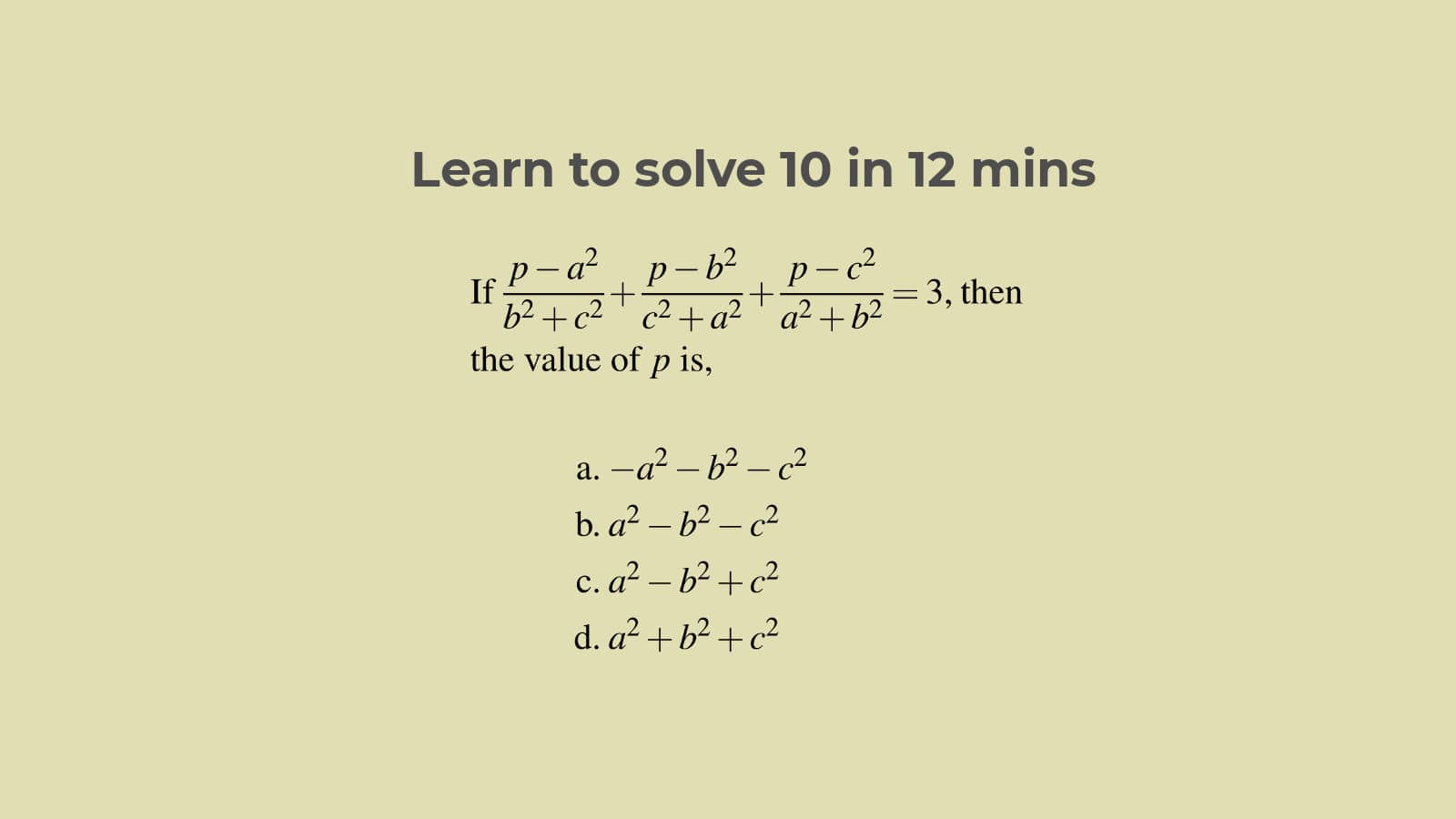

Q10. If $\displaystyle\frac{p-a^2}{b^2+c^2}+\displaystyle\frac{p-b^2}{c^2+a^2}+\displaystyle\frac{p-c^2}{a^2+b^2}=3$, then the value of $p$ is,

- $-a^2-b^2-c^2$

- $a^2-b^2-c^2$

- $a^2-b^2+c^2$

- $a^2+b^2+c^2$

Solution 10: Problem analysis and instant solution by choice value trial

Using the choice values as a free resource, it is immediately apparent from the options that substituting $p=a^2+b^2+c^2$ makes the numerator of each term equal to its denominator, so each term becomes 1 and the LHS equals 3, the RHS. The equation is thus satisfied. This is an instant solution bypassing any mathematical deduction.

If you do not want to try out the choice values, you have to use either mathematical reasoning or advanced pattern use.

The advantage of these two approaches is that you get to know more about this special type of algebraic expression structure, which should help you in future problem solving.

Both the solutions being mental solutions and sufficiently fast, you can choose either of these more conceptual methods.

First Alternate Solution 10: Secondary resource sharing between primary terms

You can comfortably classify the three terms in the LHS as the primary terms and the numeric term 3 on the RHS as the secondary term. The primary terms are similar in structure but the secondary term is totally different. As it is a multiple of 3, it strikes you: can it be split and shared equally between the primary terms? The result will be subtraction of 1 from each of the three primary terms, leading to the same numerator $p-(a^2+b^2+c^2)$ in each. With the RHS becoming 0, the answer will just be $p=a^2+b^2+c^2$.

Let us show you.

We split the 3 in RHS into three 1s and combine each with one of the three LHS terms,

$\displaystyle\frac{p-a^2}{b^2+c^2}+\displaystyle\frac{p-b^2}{c^2+a^2}+\displaystyle\frac{p-c^2}{a^2+b^2}=3$,

Or, $\displaystyle\frac{p-a^2}{b^2+c^2}-1+\displaystyle\frac{p-b^2}{c^2+a^2}-1+\displaystyle\frac{p-c^2}{a^2+b^2}-1=0$,

Or, $\displaystyle\frac{p-(a^2+b^2+c^2)}{b^2+c^2}+\displaystyle\frac{p-(a^2+b^2+c^2)}{c^2+a^2}+\displaystyle\frac{p-(a^2+b^2+c^2)}{a^2+b^2}=0$,

Or, $\left[p-(a^2+b^2+c^2)\right]\left[\displaystyle\frac{1}{b^2+c^2}+\displaystyle\frac{1}{c^2+a^2}+\displaystyle\frac{1}{a^2+b^2}\right]=0$,

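As each term of the second factor is the inverse of a sum of squares, it is positive for real $a$, $b$ and $c$. The second factor then cannot be zero, so the first factor must be zero, that is,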

$p-(a^2+b^2+c^2)=0$,

Or, $p=a^2+b^2+c^2$.

This solution uses the usual pattern and method of secondary term value sharing between the primary terms on the LHS and can easily be carried out wholly in mind.

Second Alternate solution 10: Solution by using missing element pattern, numerator equalization and mathematical reasoning

This solution uses more general concepts and methods and can also be carried out wholly in mind.

Direct fraction addition of the LHS terms being cumbersome, the most promising approach to combine and simplify the expressions is to equalize the numerators and reduce the RHS to 0. This is the use of numerator equalization, a strategic and dependable line of mathematical reasoning.

It is also identified that each of the three denominators has one term missing from the complete set of $a^2+b^2+c^2$. Incidentally, the missing term is present in the numerator of each term—$a^2$, $b^2$ and $c^2$ for the first, second and third terms respectively.

Naturally you deduce that subtracting 1 from each LHS term would make the numerators equal, meeting our original objective. Most conveniently the resource 3 to be shared equally for this subtraction waits all the time on the RHS.

This is the use of the missing element pattern, which is just as dependable.

Answer: Option d: $a^2+b^2+c^2$.

Key concepts used: Symmetric expression -- Secondary resource of RHS value sharing between LHS terms -- Principle of free resource use -- Many ways technique -- Key pattern identification -- Factorization -- Sum of square terms property -- Missing element pattern -- Numerator equalization.
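The choice value trial can also be sketched in code. The block below assumes the sample values $a=1$, $b=2$, $c=3$ and uses exact rational arithmetic; the helper name `lhs` and the dictionary `options` are illustrative.

```python
from fractions import Fraction

def lhs(p, a, b, c):
    """LHS of the given equation for integer p, a, b, c."""
    A, B, C = a * a, b * b, c * c
    return Fraction(p - A, B + C) + Fraction(p - B, C + A) + Fraction(p - C, A + B)

# Assumed sample values, used only for this trial.
a, b, c = 1, 2, 3
A, B, C = a * a, b * b, c * c

# The four choice values of p.
options = {
    "a": -A - B - C,
    "b": A - B - C,
    "c": A - B + C,
    "d": A + B + C,
}

matches = [name for name, p in options.items() if lhs(p, a, b, c) == 3]
print(matches)   # ['d']
```

A single sample triple is enough to eliminate the three wrong options here, though it is the reasoning above that shows the result holds in general.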
